Optimization is a full time job!

16/Sep 2009

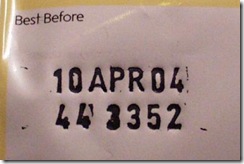

From time to time, when I have a spare moment, I like to run the game under a profiler and see what’s changed since the last test. I do it roughly every 3-4 weeks, and my basic conclusion is that code is like food: it has an expiration date, and later it rots. It doesn’t really matter that just 2 weeks ago you eliminated 1000 cache misses; now they’re back, in a different place perhaps, but the total number of misses per frame is almost the same again. The same goes for other characteristics, including FPS. Remember those 2 frames per second you shaved off recently? Kiss them goodbye. Honestly, I don’t see an easy solution here, but the first step is to admit we have a problem at all. I’ve worked in places where no one really cared about performance until the very end of a project, when it suddenly became a major problem… Thing is, 3 months before a release it may be a little bit too late. Let’s optimistically assume that we agree optimization is crucial and should be performed continuously throughout the project lifecycle. What are our options?

60 FPS rule. I’ve written about this a little bit when discussing the God of War postmortem. You choose your FPS target and try to stick to it. Display the current frame counter in a big font at all times. The higher the target, the better it should work, as even little screw-ups will affect the framerate (at 16.67 ms per frame, there’s not much room for sloppiness). Sounds like a good plan, but it needs to be the leading philosophy for the whole project, or it will be dropped sooner or later. Easier said than done. In my experience people often invest lots of time in creating complicated test systems, where you can auto-play a few levels, record average/min/max framerate, memory usage and so on, and generate pretty reports: the full package. What they (well, we, because I’ve been there as well) don’t realize is that it’s only one of the steps and, I dare say, the easy one. The hard part is convincing people that they should care about it. Otherwise, performance will still slowly degrade… OK, so the framerate went down a little bit, but that’s not my problem, right? Someone else will surely fix it. If not, it’s not a tragedy anyway, we’re talking 0.3 FPS less here. Two months later you’re running at 10 FPS less. At one of my previous companies we wrote a rather nice texture viewer, where you could cycle through all the textures in the scene, see them in the scene, tint mipmaps and so on. We implemented it, put it in the game and kinda expected that people would start to use it. I don’t think anyone ever ran it; I’m not even sure folks were aware of its existence.
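
The big frame counter and the auto-play reports both boil down to collecting frame times against a fixed budget. A minimal C++ sketch of that bookkeeping follows, under the assumption that the engine hands it one frame duration per frame; the `FrameStats` class and its method names are hypothetical, not part of any existing engine.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <limits>

// Hypothetical helper: accumulates frame times so an on-screen counter or an
// automated fly-through can report min/avg/max against a fixed budget.
class FrameStats {
public:
    explicit FrameStats(double budgetMs) : budgetMs_(budgetMs) {}

    // Record one frame's duration in milliseconds.
    void addFrame(double ms) {
        assert(ms >= 0.0);  // a negative frame time means a broken timer
        ++frames_;
        totalMs_ += ms;
        minMs_ = std::min(minMs_, ms);
        maxMs_ = std::max(maxMs_, ms);
        if (ms > budgetMs_) ++overBudget_;
    }

    std::size_t frames() const { return frames_; }
    double averageMs() const { return frames_ ? totalMs_ / frames_ : 0.0; }
    double minMs() const { return frames_ ? minMs_ : 0.0; }
    double maxMs() const { return frames_ ? maxMs_ : 0.0; }

    // Frames that missed the budget, i.e. dropped below the target FPS.
    std::size_t framesOverBudget() const { return overBudget_; }

    // Average FPS derived from the average frame time.
    double averageFps() const {
        const double avg = averageMs();
        return avg > 0.0 ? 1000.0 / avg : 0.0;
    }

private:
    double budgetMs_;
    std::size_t frames_ = 0;
    double totalMs_ = 0.0;
    double minMs_ = std::numeric_limits<double>::max();
    double maxMs_ = 0.0;
    std::size_t overBudget_ = 0;
};
```

With a 60 FPS target the budget is 1000 / 60 ≈ 16.67 ms. `framesOverBudget()` is arguably the number worth putting on screen next to the average, since an average can look fine while individual frames spike.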

Even if you manage to convince the company as a whole that they should care about performance, it may not be enough. With group responsibility, things usually get blurry. One rule could be: after every change, make sure that it doesn’t degrade performance. If it does, it is your task to fix it (or to find someone who will).
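
A rule like “after every change, make sure it doesn’t degrade performance” is easiest to enforce when the check is mechanical. As an illustration only, the sketch below compares metrics from an automated run against the last accepted baseline; the `Metric` struct, the `findRegressions` function, the metric names and the 2% tolerance are all assumptions, not an existing tool.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical per-change gate: compare metrics from an automated run
// against the last accepted baseline and list everything that got worse.
struct Metric {
    std::string name;  // e.g. "frame_ms", "draw_calls", "cache_misses"
    double baseline;   // value from the last accepted build
    double current;    // value measured with the new change
};

// Returns the names of metrics that grew by more than `tolerance`
// (0.02 == 2%). This sketch assumes lower is better for every metric.
std::vector<std::string> findRegressions(const std::vector<Metric>& metrics,
                                         double tolerance) {
    assert(tolerance >= 0.0);
    std::vector<std::string> regressed;
    for (const Metric& m : metrics) {
        if (m.current > m.baseline * (1.0 + tolerance)) {
            regressed.push_back(m.name);
        }
    }
    return regressed;
}
```

A nightly build or pre-check-in script could reject the change, or at least report it to whoever made it, whenever the returned list is non-empty. That is one way to keep those 0.3 FPS losses from quietly piling up.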

Another solution would be to make someone exclusively responsible for performance. It’d be his task to launch the game every day, profile it and make sure we’re still on track. This may seem wasteful, and it’d probably be hard to convince management that it can be a full-time job… It may not take 100% of the time, but you can always plan, for example, 2 days every week for profiling & optimization. I’m not even sure one person is enough here, but it depends on the specific case. Someone who’s familiar with both CPU & GPU details could probably manage (but such people are quite rare); otherwise it’d have to be divided between specialists in those areas. It may not be the most ‘flashy’ job, but it can be very rewarding. On the one hand, you know that no one will notice that you’ve just eliminated 1k cache misses; on the other, it still feels good. There’s something cool about watching ‘Top Issues’ disappear from the PIX table one by one. The highest form of this deviance is when you check in a change that doesn’t really result in any measurable gain, but the performance graphs ‘look’ more elegant/cleaner/reasonable :). Sadly, in my experience there are not many companies that see the need for a position like that. People still prefer to think that optimization is something you do after alpha. Before that, everyone should be busy adding features as quickly as possible; at the end we’ll reduce the draw range or downgrade some textures and we’ll be fine. No wonder there are so many postmortems where people describe how the game was running at 10 FPS just 4 months before release…

Old comments

Kris Taeleman 2009-09-21 18:44:24

While having a big ass fps counter and memory stats definitely help, it’s still a matter of changing the mentality of 99% (maybe less, but just trying to make a point :) ) of the coders out there. From my experience, most of them don’t care or have the “it’s not my code so it’s not my problem” mentality. The guy who cares usually gets swamped with those problems and ends up spending months trying to course correct.

It’s the responsibility of the lead to enforce those rules and have the implementer revisit the code until it’s done. This educates those people and prevents the same mistakes from persisting throughout the project, but it might take some time to “educate” them in the beginning.

And I agree that this is not a matter of premature optimization; it’s a matter of keeping your game in a healthy, playable state for the whole project, which in the end improves gameplay/stability/… and, ultimately, your scores.

admin 2009-09-21 18:40:10

To elaborate a little bit, and also in relation to peirz’s comment: features do change, and sometimes they enter the codebase in a beta state, when it probably isn’t wise to spend much time optimizing them. In my experience that state usually doesn’t last too long, though, and at some point a decision has to be made whether the feature stays in (roughly) its current form or not. If it does, it’s time to make sure we’re still within budget. It’s still possible it’ll be removed/modified later, so the work is wasted, but that’s how it works in this business: nothing is final until the game is on shelves.

Liam 2009-09-21 18:04:47

@Branimir Whoa there, horsey! It looks like I upset you.

Notice the “little” in my post?

So I take it you are saying re-factoring is BS and never happens? Features are not changed and there is no wasted time.

Here is a quote, which at the moment I cannot credit to its author: “You never know what game you are making until it is made.”

I was merely asking for any tips, if I was asking how to curse then your reply would be satisfactory.

“If your feature works, but doesn’t fit in budget, you’re not done yet.” OK.

Branimir Karadzic 2009-09-20 18:03:20

One easy way to track memory leaks on a daily basis is to get a memory dump at exit (atexit). This practice also helps with thread shutdown, static destructor cleanup, and other stuff that programmers who exit through the debugger (Shift+F5) don’t think about. By maintaining a clean exit, you’ll probably find all the dumb memory leaks (and deadlocks on thread join, order-of-construction and dependency problems, etc.), and then you can just work on the ones that are properly cleaned up at exit but still leak during runtime. :)
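
The atexit dump can be approximated in standard C++ by replacing the global allocation functions and counting live blocks. This is a minimal sketch under that assumption: `liveAllocations` and `reportLeaksAtExit` are hypothetical names, and it only counts blocks, while a real leak tracker would also record sizes and call stacks. Blocks owned by static objects that are destroyed after the handler runs will also show up in the count, so it is most useful when compared against a known-clean baseline.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdio>
#include <cstdlib>
#include <new>

// Minimal sketch: count live heap blocks by replacing the global
// allocation functions, and print the count when the program exits cleanly.
namespace {
std::atomic<long> g_liveBlocks{0};

void reportLeaksAtExit() {
    // fprintf rather than iostreams, so the report itself does not allocate.
    std::fprintf(stderr, "[leak check] %ld heap block(s) still alive at exit\n",
                 g_liveBlocks.load());
}

// Registers the report during static initialization.
[[maybe_unused]] const int g_registered = (std::atexit(reportLeaksAtExit), 0);
}  // namespace

long liveAllocations() { return g_liveBlocks.load(); }

// Replacement for the global scalar operator new; the array and nothrow
// forms forward to it by default.
void* operator new(std::size_t size) {
    if (void* p = std::malloc(size ? size : 1)) {
        g_liveBlocks.fetch_add(1);
        return p;
    }
    throw std::bad_alloc();
}

void operator delete(void* p) noexcept {
    if (p) {
        const long previous = g_liveBlocks.fetch_sub(1);
        assert(previous > 0);  // more frees than allocations: memory mismatch
        (void)previous;
        std::free(p);
    }
}

// Sized delete (C++14) routed to the unsized version above.
void operator delete(void* p, std::size_t) noexcept { ::operator delete(p); }
```

Printing a single number at exit every day is crude, but it makes the trend visible, which is exactly what drifts back up when nobody looks.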

admin 2009-09-20 17:31:18

Hah, don’t get me started on memory leaks. I still have nightmares from one of my previous projects. When I first started tracing leaks (only because we started to run out of memory), just loading the level and exiting the game would result in 200 thousand leaked blocks. After three weeks I was ready to scrape my eyes out with a spoon; it’d have been more fun than this. Anyway, I got it down to some reasonable amount (application-level leaks only). A few months later it was almost the same as at the very beginning.

peirz 2009-09-19 22:40:03

I think what Liam was getting at is that it’s a waste of everybody’s time to optimize code that isn’t going to be around for long, or at least not in its current form.

I totally see the blog’s point though; substitute “cache misses” with “memory leaks” and you’ve got my last project. There’s no magic, just two steps. First, make the problem visible: just like you have the big ass fps counter, show any other metric that you want optimized. Second, make sure that management agrees that this is a priority. They don’t have to set up a 100% full-time cop position, but at least they should allow (or encourage) the team to fix these things when they see them… instead of prioritizing new features over everything else and putting “fixing things” at the end. I still have some hope that, if people don’t fix these problems right away, it’s not because they’re bad people, just that management wants them to do too many other things first… :)

Michal I. 2009-09-19 20:18:10

Ahh, the famous mipmap tinting! Very popular feature back then :) If I remember correctly, we wrote it three times. And each time, no one was aware of previous implementations…

admin 2009-09-19 18:48:53

Branimir explained it better than I would, so I’ll just add my 2 SEK. I’m not saying you have to squeeze every cycle and hand-optimize everything in assembly from day one, but everyone should work with optimization in mind. You should probably start by establishing time/memory budgets for different subsystems and try to fit within them at every stage of development. No “we’ll fix it later” bullshit, as it’s a recipe for disaster and Sundays spent at work. If your feature works, but doesn’t fit in budget, you’re not done yet.
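
Per-subsystem budgets can be written down as data so that “doesn’t fit in budget” becomes a yes/no check rather than a feeling. The sketch below is one possible shape for that; the `Budget` and `Measurement` types and the helper names are illustrative assumptions, and any numbers you plug in would come from your own project.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical budget table: each subsystem gets a slice of the frame
// and of memory.
struct Budget {
    std::string subsystem;
    double frameMs;   // CPU time allowed per frame
    double memoryMB;  // memory allowed
};

struct Measurement {
    double frameMs;
    double memoryMB;
};

// A feature is "done" only when its subsystem fits both budgets.
bool withinBudget(const Budget& b, const Measurement& m) {
    assert(b.frameMs >= 0.0 && b.memoryMB >= 0.0);  // budgets must be sane
    return m.frameMs <= b.frameMs && m.memoryMB <= b.memoryMB;
}

// The time slices must themselves fit in the frame (16.67 ms at 60 FPS).
double totalFrameMs(const std::vector<Budget>& budgets) {
    double total = 0.0;
    for (const Budget& b : budgets) total += b.frameMs;
    return total;
}
```

Keeping the sum of the slices below the whole frame leaves some headroom for spikes, and checking `withinBudget` at every stage, not just after alpha, is the point of the exercise.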

Liam 2009-09-19 15:08:44

Interesting, although it sounds a little like premature optimisation to me. It may well lead to optimisation of features which are refactored at a later stage or removed altogether, or be performed only on what currently shows up as a bottleneck, which may be a false positive.

Is there any insight you can provide to prevent this?

Or do you feel the benefits of constant optimisation outweigh the unproductive time wasted?

Branimir Karadzic 2009-09-19 16:51:14

@Liam: the “premature optimization” excuse is bullshit. It’s bullshit because everyone uses it as an excuse not to understand how their code actually maps to hw, an excuse not to learn how the compiler transforms their code to run on hw, and a license to carelessly write a bunch of crap code, etc. It’s bullshit because if you spend enough time not thinking about how your code actually works on hw, and you keep bullshitting with high-level abstractions, coupled classes and other nonsense, it will eventually become so complex that it will be dangerous for the project if anyone touches it. It’s bullshit because no one ever seriously goes back and rewrites their code to be actually optimal. The way non-prematurely-optimized code gets optimized is to figure out how to not go through that code path and then cut the stuff that causes the code to go through it. A month before the product ships it sounds like this: “AI is slow, go cut active NPCs on that level”, “animation is slow, oh, cut the number of characters you can see”, “rendering is slow, just don’t render as much”, etc. And that’s 90% of how non-prematurely-optimized code gets optimized in the average game studio.

On every project I’ve seen, it goes like this: from project start until 3 months before the product ships you hear “premature optimization is the root of all evil”. From 3 months before the product ships until it ships you hear “we don’t have time to optimize now, do whatever you can to get us to 30 FPS”.

With bad FPS throughout the project, your team can’t see where they actually are. At 10 FPS you can’t figure out whether the game is fun.
